- Maxime Greau

- May 8, 2017

How we migrated 350+ Maven CI jobs to Pipeline as Code with Jenkins 2 and Docker!

A year ago at eXo, we decided to build all our projects in Docker containers.

In this series of articles, we will tell you our story of how we upgraded more than 350 Jenkins “standard” Maven jobs to Pipeline as code on our Continuous Integration servers, using Jenkins 2 and Docker.

This is a good opportunity to revisit the problems we encountered and the solutions we adopted, as well as to examine some best practices around Maven/Gradle/Android builds in Docker containers, all managed by the Jenkins Pipeline as Code pattern.

Content

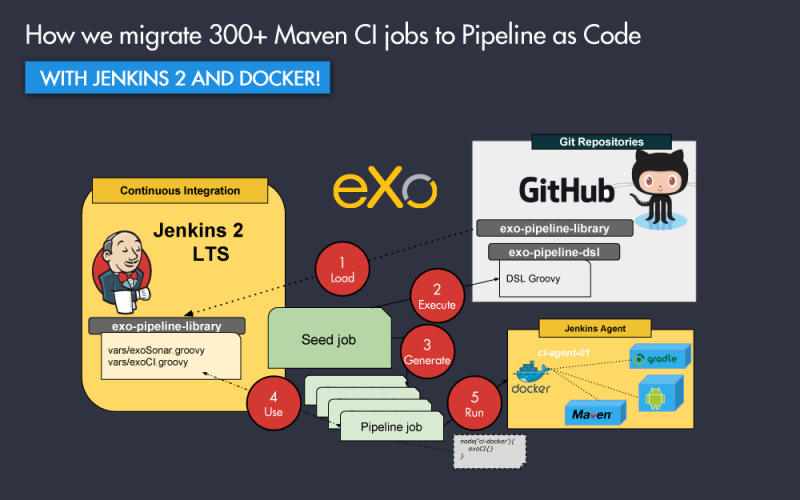

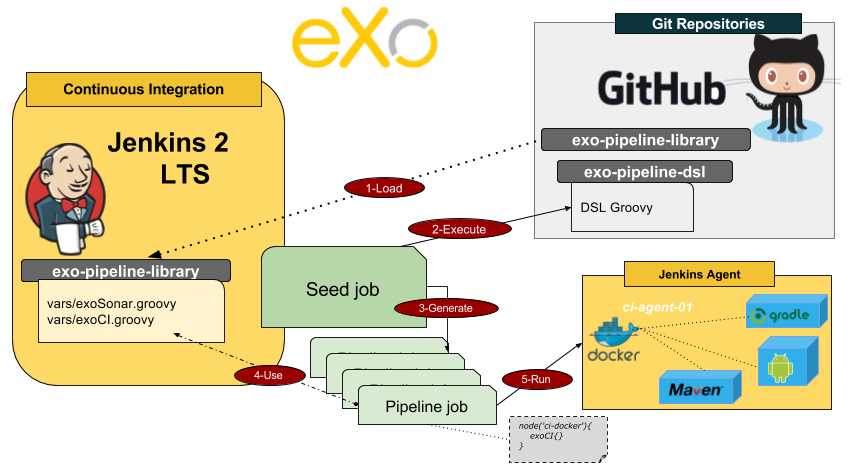

As with any important technical migration, we did it step by step. The 3 major steps were:

- Create CI Docker Images

- Use Jenkins Pipeline & Pipeline Docker plugins

- Generate all Pipeline jobs with DSL jobs

The following diagram represents the workflow for these steps:

In this article, we will explain the context (why we did it), and the first step of the migration towards creating your own CI Docker images.


What we build

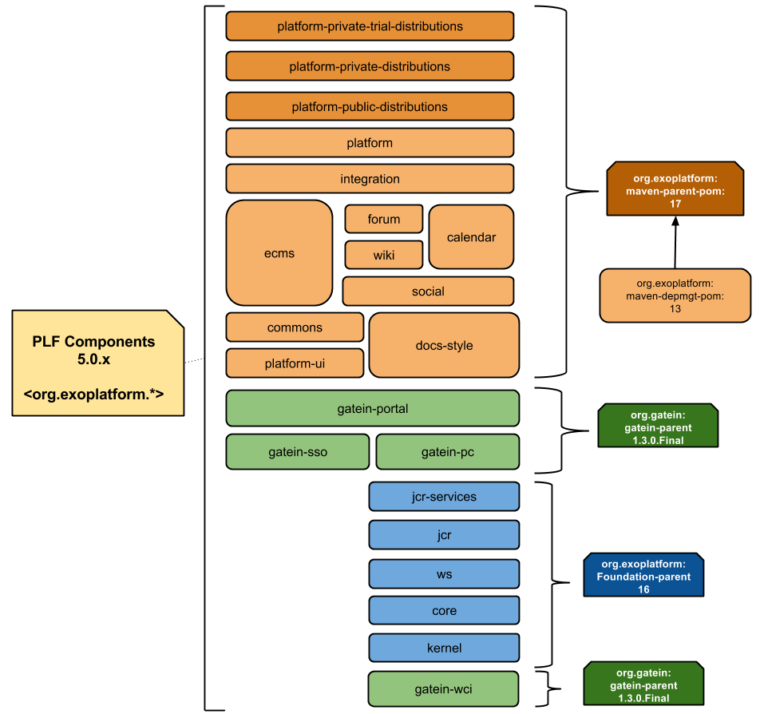

Platform components and add-ons

First, we have multiple Git repositories for all the components required to create a distribution package for eXo Platform:

- 25+ components to build

  - Platform projects (cf. diagram below)

  - Juzu projects

- Add-ons

  - 15+ supported Add-ons

  - 100+ community Add-ons

- Native Mobile projects

  - Android Application

  - iOS Application

Git Workflow

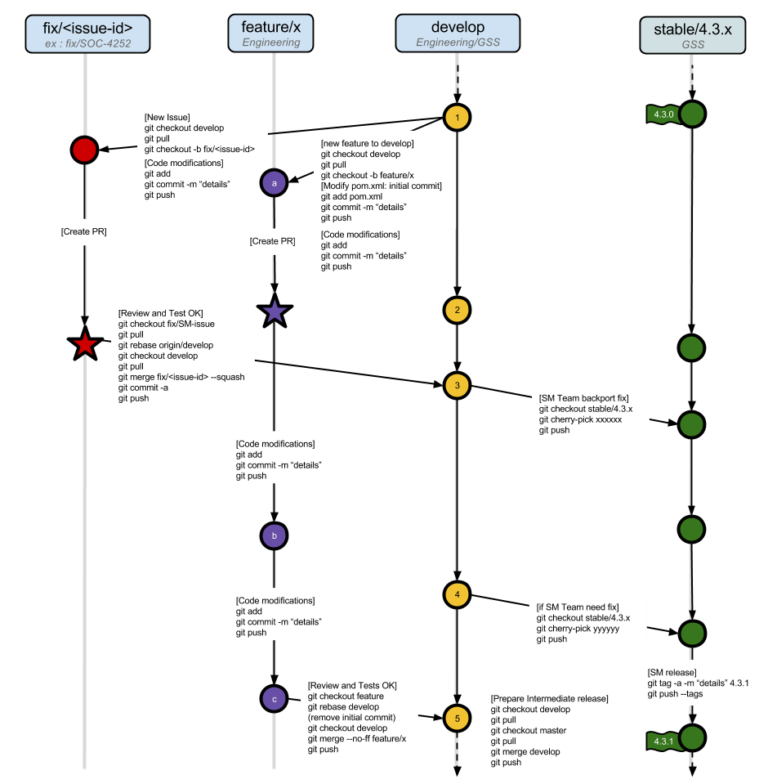

We use a Git workflow based on a branching model. All eXo projects are required to follow this model:

- Develop: the branch that holds the latest validated development changes.

- Feature: Feature branches are dedicated to one big feature.

- Stable/xxx: Stable branches are used to perform releases and to write or accept fixes. “xxx” is the stable version name (e.g. 1.0.x).

- Fix: Fix branches are dedicated to integrating a bugfix on the Development branch. If needed, the fix is then cherry-picked to a more stable branch.

- Integration: Integration branches are dedicated to automatic integration tasks (like Crowdin translations, for example).

- POC: POC branches are dedicated to developing a proof of concept.
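As a rough illustration of how a CI script might tell these branch types apart, here is a minimal shell sketch. The lowercase prefixes (`feature/`, `stable/`, `fix/`, `integration/`, `poc/`) and the function name `branch_type` are assumptions made for the example; the naming actually used in the eXo repositories may differ.

```shell
# Hypothetical helper: map a branch name to its role in the branching model.
# The prefixes below are illustrative assumptions, not taken from the eXo repositories.
branch_type() {
  case "$1" in
    develop)        echo "development" ;;
    feature/*)      echo "feature" ;;
    stable/*)       echo "stable" ;;
    fix/*)          echo "fix" ;;
    integration/*)  echo "integration" ;;
    poc/*)          echo "poc" ;;
    *)              echo "unknown" ;;
  esac
}

branch_type "stable/1.0.x"   # prints: stable
```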


Platform versions and clients

Over the last 10 years eXo has released many versions of the eXo Platform. With eXo Platform 5 currently in development, in addition to the versions we maintain for our clients (eXo Platform 4.x : 4.0, 4.1, 4.2, 4.3, 4.4), we always need to manage several versions of the Build stack.

We build projects with JDK 6, JDK 7 or JDK 8, and with Maven 3.0, 3.2 or 3.3. The native Android application is built with Gradle.

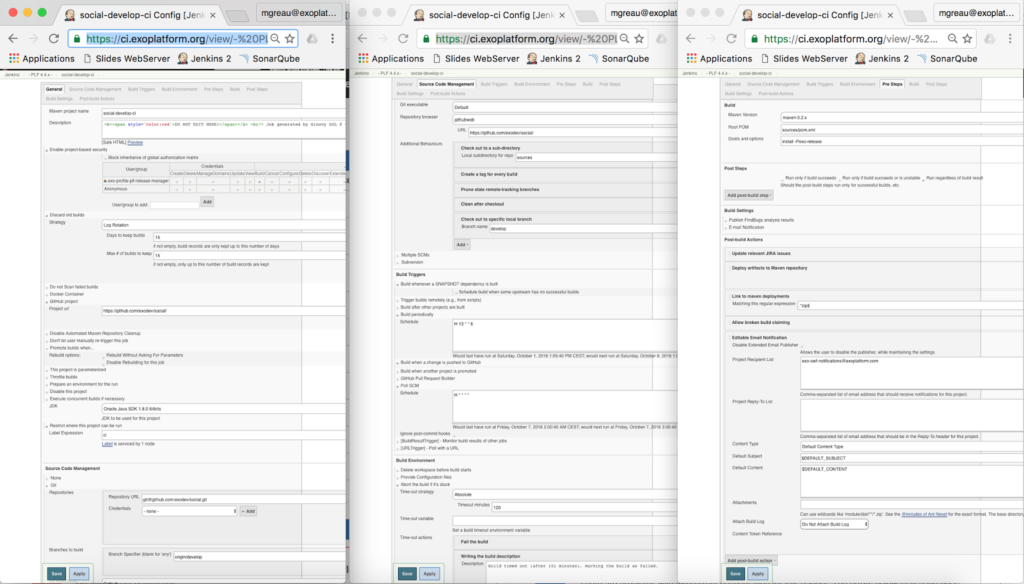

Remember how to create a Jenkins job via the UI?

“Jenkins DSL and Pipeline jobs with Docker to the rescue!”

As a result, we have had to manage a lot of builds via the Jenkins UI and many tools in several versions (Maven, JDK, etc.) on CI agents and development laptops.

Finding this inefficient and painful, we decided to find a solution to automate and manage it in a different way.

The container (Docker) technology combined with the emergence of automation tools layered onto Jenkins with Pipeline, and the efficiency of the Job DSL plugin, appeared to be the ultimate solution in the eXo context.

Step 1: Create your own Docker Images

Choose a distribution base image

All our CI agents are managed via Puppet and are based on the Ubuntu distribution, so we decided to use a lighter Docker image based on this distribution: the baseimage-docker.

This was a good compromise between a very small image like Alpine, which resulted in problems related to JDK installation, and the official Ubuntu Docker image, which was too big.

    FROM phusion/baseimage:0.9.21
    LABEL MAINTAINER="eXo Platform <docker@exoplatform.com>"

Define the locale

Who hasn’t had a test failure due to encoding issues? It works on your Linux machine, but it fails on your colleague’s laptop running Windows.

Thus, it’s mandatory to define a locale to ensure that all developers will have the same behavior, whatever their operating system, and that it will be compliant with the CI server configuration.

    # Set the locale
    RUN locale-gen en_US.UTF-8
    ENV LANG=en_US.UTF-8
    ENV LANGUAGE=en_US:en
    ENV LC_ALL=en_US.UTF-8
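A quick way to confirm that the setting took effect is to inspect the variables from inside the container. The sketch below is an illustration only; `is_utf8_locale` is a hypothetical helper and not part of the eXo images.

```shell
# Hypothetical helper: succeed if a locale string selects a UTF-8 encoding.
is_utf8_locale() {
  case "$1" in
    *.UTF-8|*.utf8) return 0 ;;
    *)              return 1 ;;
  esac
}

# LC_ALL takes precedence over LANG, so check it first.
current="${LC_ALL:-${LANG:-}}"
if is_utf8_locale "$current"; then
  echo "locale OK: $current"
else
  echo "locale is not UTF-8: '${current}'"
fi
```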

Create a dedicated CI user

“…take care of running your processes inside the containers as non-privileged users (i.e., non-root)” is one of the most important best practices from the Docker security guide.

In the Jenkins context, it’s important to create a dedicated CI user that matches the uid:gid of the user used by Jenkins on your server agents.

    # eXo CI User
    ARG EXO_CI_USER_UID=13000
    ENV EXO_CI_USER=ciagent
    ENV EXO_GROUP=ciagent
    ...
    # Create user and group with specific ids
    RUN useradd --create-home --user-group -u ${EXO_CI_USER_UID} --shell /bin/bash ${EXO_CI_USER}

Indeed, when Jenkins executes a Pipeline job, it has to mount the workspace and other required folders into the Docker container.

For example, Jenkins will execute the following command on the server agent:

    $ docker run -t -d -u 13000:13000 \
        -v m2-cache-ecms-develop-ci:/home/ciagent/.m2/repository \
        -v /srv/ciagent/workspace/ecms-develop-ci:/srv/ciagent/workspace/ecms-develop-ci:rw \
        -v /srv/ciagent/workspace/ecms-develop-ci@tmp:/srv/ciagent/workspace/ecms-develop-ci@tmp:rw \
        ... -e ******** --entrypoint cat exoplatform/ci:jdk8-maven33

This dedicated CI user will prevent permissions issues with Docker volumes.
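A minimal sketch of the idea, not the command Jenkins generates: the helper below builds the `-u` and `-v` arguments from a given user so that files written in the mounted workspace keep the expected owner. The function name `docker_run_args` is hypothetical, and it only prints the arguments rather than calling Docker.

```shell
# Hypothetical helper: print the docker run arguments that map a user and a
# workspace into the container. Nothing is executed; the output is for inspection.
docker_run_args() {
  uid="$1"        # numeric user id, e.g. the output of `id -u`
  gid="$2"        # numeric group id, e.g. the output of `id -g`
  workspace="$3"  # absolute path of the workspace on the agent
  echo "-u ${uid}:${gid} -v ${workspace}:${workspace}:rw"
}

# On a CI agent the ids would be those of the Jenkins user.
docker_run_args "$(id -u)" "$(id -g)" "$PWD"
```

If the uid inside the image and the uid passed with `-u` differ, the process in the container may not be able to write to the mounted workspace, which is the permission problem the dedicated user avoids.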

You will notice that we define the EXO_CI_USER_UID variable with the Docker ARG instruction. This is important for the developer experience, which we will explain later in this series of articles.
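Because the uid is a build argument, it can be overridden at build time. The sketch below shows one way a developer might rebuild an image with their own uid; the function name, the tag `my-ci:local`, and the build context `.` are assumptions for illustration, and the workflow described later in the series may differ.

```shell
# Hypothetical helper: print a docker build command that overrides the
# EXO_CI_USER_UID build argument. The command is printed, not executed.
build_command_for_uid() {
  uid="$1"   # uid to bake into the image
  tag="$2"   # tag for the resulting image (illustrative)
  echo "docker build --build-arg EXO_CI_USER_UID=${uid} -t ${tag} ."
}

build_command_for_uid "$(id -u)" "my-ci:local"
```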

ENTRYPOINT and CMD

In a Dockerfile, the ENTRYPOINT instruction optionally defines the first part of the command to be run. Both the ENTRYPOINT and CMD instructions can identify the default executable for the Docker image.

The best way is to combine both of them by using CMD to provide default arguments for the ENTRYPOINT.

In earlier versions of the Jenkins Docker Pipeline plugin, Jenkins didn’t use the --entrypoint option when starting containers, so the command was:

    $ docker run ... -e ******** exoplatform/ci:jdk8-maven33 cat

In that case, if your Docker Image declared an ENTRYPOINT as startup command, Jenkins was not able to use your image.
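To see why, it helps to spell out how Docker assembles the command it runs: the ENTRYPOINT comes first, followed by the arguments given to `docker run`, or by CMD when no arguments are given. The shell function below is only a model of that rule, assuming a hypothetical image whose ENTRYPOINT is `mvn` and whose CMD is `--help`; it is not how Docker is implemented.

```shell
# Model of Docker's rule: ENTRYPOINT + (docker run arguments, or CMD if none).
# Usage: effective_command <entrypoint> <default-cmd> [run arguments...]
effective_command() {
  entrypoint="$1"
  default_cmd="$2"
  shift 2
  if [ "$#" -gt 0 ]; then
    echo "${entrypoint} $*"              # run arguments replace CMD
  else
    echo "${entrypoint} ${default_cmd}"  # no arguments: CMD supplies the defaults
  fi
}

effective_command mvn --help                 # prints: mvn --help
effective_command mvn --help clean package   # prints: mvn clean package
effective_command mvn --help cat             # prints: mvn cat
```

The last line is the failure case: the `cat` that Jenkins appends is passed to Maven as an argument instead of being run as a command.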

That’s why we added a custom script in our Docker Images, as a workaround, which allows one to execute Maven as a startup command, but also to execute the cat command and other commands executed with an absolute path:

Dockerfile

    COPY docker-entrypoint.sh /usr/bin/docker-entrypoint
    # Workaround to be able to execute commands other than "mvn" as entrypoint
    ENTRYPOINT ["/usr/bin/docker-entrypoint"]
    CMD ["mvn", "--help"]

docker-entrypoint.sh

    #!/bin/bash
    # Hack for Jenkins Pipeline: authorize cat without absolute path
    if [[ "$1" == "/"* ]] || [[ "$1" == "cat" ]]; then
        # Absolute path or cat: run the command as given
        exec "$@"
    fi
    # Anything else is passed to Maven as arguments
    exec mvn "$@"

$ docker run ... -e ******** --entrypoint cat exoplatform/ci:jdk8-maven33

Use inheritance to avoid code duplication

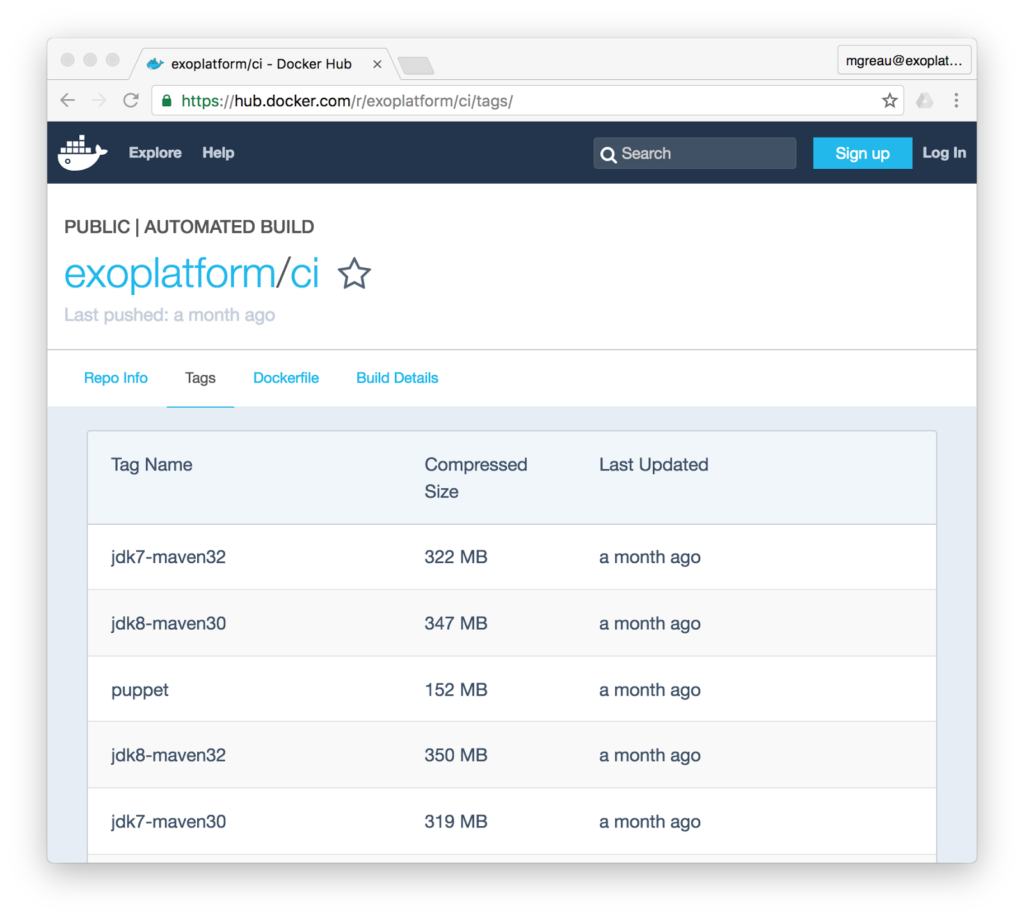

We have created and continue to create Docker images to cover our entire Build Stack. Currently this list contains:

- exoplatform/ci:jdk6-maven30

- exoplatform/ci:jdk7-maven30

- exoplatform/ci:jdk7-maven32

- exoplatform/ci:jdk8-maven32

- exoplatform/ci:jdk8-maven33

- exoplatform/ci:jdk8-gradle2

- …

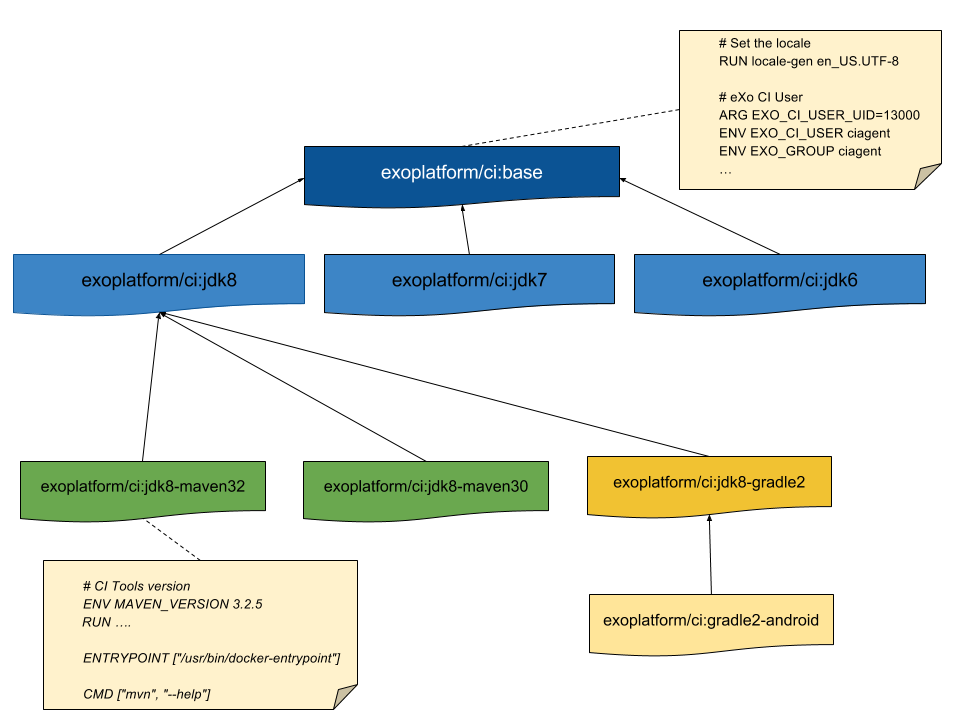

As you can imagine, there are not many differences between all those images, so we have created CI base images at several levels to be able to avoid code duplication as much as possible.
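One way to picture the layering: each tool image builds on a JDK-only base image, so its parent can be read off the tag. The sketch below assumes the `jdk<N>-<tool>` tag convention visible in the list above and a JDK-only parent such as `exoplatform/ci:jdk8`; `parent_image` is a hypothetical helper, and the actual hierarchy may have more levels than this shows.

```shell
# Hypothetical helper: derive the JDK base image from a tool image name,
# e.g. exoplatform/ci:jdk8-maven33 -> exoplatform/ci:jdk8.
parent_image() {
  image="$1"
  repo="${image%%:*}"   # part before the tag, e.g. exoplatform/ci
  tag="${image#*:}"     # part after the colon, e.g. jdk8-maven33
  echo "${repo}:${tag%%-*}"
}

parent_image "exoplatform/ci:jdk8-maven33"   # prints: exoplatform/ci:jdk8
```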

The diagram below shows how those images are organized:

    FROM exoplatform/ci:jdk8

    # CI Tools version
    ENV MAVEN_VERSION=3.3.9

    # Install Maven
    RUN mkdir -p /usr/share/maven \
      && curl -fsSL http://archive.apache.org/dist/maven/maven-3/$MAVEN_VERSION/binaries/apache-maven-$MAVEN_VERSION-bin.tar.gz \
        | tar xzf - -C /usr/share/maven --strip-components=1 \
      && ln -s /usr/share/maven/bin/mvn /usr/bin/mvn
    …
    # Custom configuration for Maven
    ENV M2_HOME=/usr/share/maven
    ENV MAVEN_OPTS="-Dmaven.repo.local=${HOME}/.m2/repository -XX:+UseConcMarkSweepGC -Xms1G -Xmx2G -XX:MaxMetaspaceSize=1G -Dcom.sun.media.jai.disableMediaLib=true -Djava.io.tmpdir=${EXO_CI_TMP_DIR} -Dmaven.artifact.threads=10 -Djava.awt.headless=true"
    ENV PATH=$JAVA_HOME/bin:$M2_HOME/bin:$PATH
    ...
    # Workaround to be able to execute commands other than "mvn" as entrypoint
    ENTRYPOINT ["/usr/bin/docker-entrypoint"]
    CMD ["mvn", "--help"]

docker run ... -e ******** exoplatform/ci:jdk8-maven33 clean package

docker run exoplatform/ci:jdk8-maven33 /bin/echo hello
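Both invocations above go through the entrypoint script shown earlier. The function below restates its dispatch rule so the outcome can be checked without Docker: it prints the command that would be executed instead of running it. `resolve_command` is a hypothetical name used only here.

```shell
# Hypothetical restatement of the entrypoint rule: absolute paths and "cat"
# run as given; anything else is treated as arguments to mvn.
resolve_command() {
  case "$1" in
    /*|cat) echo "$*" ;;
    *)      echo "mvn $*" ;;
  esac
}

resolve_command clean package      # prints: mvn clean package
resolve_command /bin/echo hello    # prints: /bin/echo hello
resolve_command cat                # prints: cat
```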

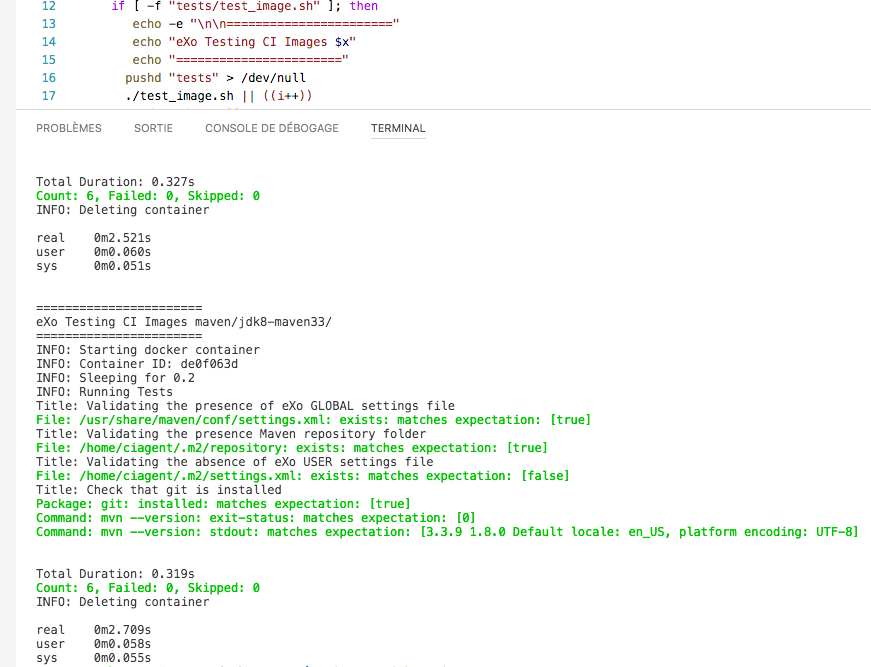

Test your Docker Images

As with other source code, you can create test suites for your Dockerfiles and images. For the eXo CI Docker images, we use Goss via dgoss.

- Goss is a YAML-based tool for validating a server’s configuration.

- dgoss is a wrapper around Goss that aims to bring the simplicity of Goss to Docker containers.

The first step is to create a YAML file to describe what you want to test in your Docker container. There are some examples online that can help you to create this configuration file, but it can also be generated from the goss command line.

For example, say we want to check that some Maven configuration files exist in the container. We also want to test that the output of the mvn --version command matches the Maven and JDK versions installed.

goss.yaml

    file:
      /home/ciagent/.m2/repository:
        title: Validating the presence of the Maven repository folder
        exists: true
      /home/ciagent/.m2/settings.xml:
        title: Validating the absence of eXo USER settings file
        exists: false
      /usr/share/maven/conf/settings.xml:
        title: Validating the presence of eXo GLOBAL settings file
        exists: true
    package:
      git:
        installed: true
        title: Check that git is installed
    command:
      mvn --version:
        exit-status: 0
        stdout:
        - 3.3.9
        - 1.8.0
        - "Default locale: en_US, platform encoding: UTF-8"
        stderr: []
        timeout: 0

dgoss run -it exoplatform/ci:jdk8-maven33 cat
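For readers without dgoss at hand, the `command` check above boils down to running the command and looking for each expected string in its output. The sketch below imitates that logic in plain shell on a captured sample; the sample text and the `output_contains_all` helper are assumptions for illustration and are not part of Goss.

```shell
# Hypothetical helper: succeed only if every expected string occurs in the output.
# Usage: output_contains_all "<output>" <expected>...
output_contains_all() {
  output="$1"
  shift
  for expected in "$@"; do
    case "$output" in
      *"$expected"*) ;;                            # found, keep checking
      *) echo "missing: $expected"; return 1 ;;
    esac
  done
  return 0
}

# Illustrative sample, shaped like lines from `mvn --version` (not real output).
sample="Apache Maven 3.3.9
Java version: 1.8.0
Default locale: en_US, platform encoding: UTF-8"

output_contains_all "$sample" "3.3.9" "1.8.0" "platform encoding: UTF-8" && echo "all checks passed"
```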

Conclusion

If you are interested in using or testing these Docker Images, you will find:

- all the Dockerfiles on the dedicated exo-docker/exo-ci GitHub repository

- all the Docker images on the eXo DockerHub Organization

Feel free to give your feedback about this.

